Researchers from Cornell University have created a modular robot that can perceive its surroundings and then make decisions based on the information it has gathered. This is the first time a modular robot has been able to autonomously reconfigure itself and change its behavior based on what it perceives. The milestone points toward an age in which adaptive, multipurpose robots could become the norm.

Hadas Kress-Gazit, associate professor of mechanical and aerospace engineering at the university and principal investigator on the project, enthused that the accomplishment opens doors toward a more efficient and sophisticated robotics industry. Currently, most robots are built to accomplish only a single type of task. Earlier iterations of these machines were both cumbersome and expensive. Scientists strove to design robots that were humanoid in form and capable of making basic decisions. However, the human form has its own limitations. A human-like robot, for example, would still experience difficulties in search-and-rescue missions.

By contrast, modular robots, which are composed of interchangeable parts (or modules), are more flexible and versatile. Parts that no longer function can be easily removed and replaced. Further, these robots can adapt to situations better than a robot with a fixed, human-like form.

The challenge, however, has been how to program these modular robots. Until now, a human operator was still needed to manipulate the different sections of the robot. This latest advance addresses that problem: a modular robot can now look at its surroundings and reconfigure itself.

These robots are composed of wheeled, cube-shaped modules that can detach and reattach to form a variety of shapes, each of which supports different capabilities. The modules are fitted with tiny magnets that let them attach to one another. The modules communicate with a centralized system over Wi-Fi.

While other modular robot systems have been successful at performing specific tasks in controlled environments, this is the first time that a modular robot has demonstrated fully autonomous behavior in an unfamiliar environment.

Kress-Gazit explained, “I want to tell the robot what it should be doing, what its goals are, but not how it should be doing it. I don’t actually prescribe, ‘Move to the left, change your shape.’ All these decisions are made autonomously by the robot.”

This research was published in Science Robotics. Research funding was granted by the National Science Foundation.

A brief history of robotics

The concept of “robots” is nothing new. Technology experts believe that the Greek philosopher Aristotle wrote about “automated tools” during his time. Nonetheless, it is generally accepted that robotics, as an industry and as an idea, began in 1942, when American author Isaac Asimov introduced the “Three Laws of Robotics” in his short story “Runaround.” The laws describe a robot that must obey human orders and never injure a human being.

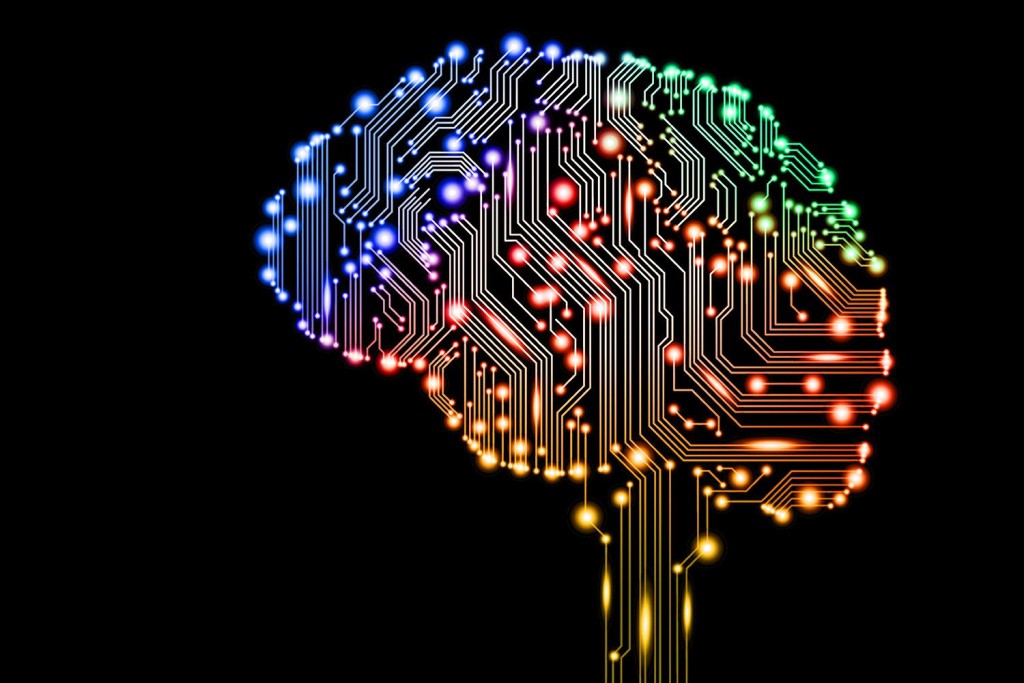

In 1943, Warren McCulloch and Walter Pitts wrote “A Logical Calculus of the Ideas Immanent in Nervous Activity,” which detailed the possible creation of an artificial neural network that would serve as the “brain” of various computing systems. This idea came to fruition in 1951, when Marvin Minsky and Dean Edmonds built SNARC (the Stochastic Neural Analog Reinforcement Calculator), widely regarded as the first neural network machine.

In the last 60 years, technology has advanced at such a speed that even AI experts are astonished. What were once considered science fiction dreams (like those seen in the first Star Wars film, released in 1977) are now slowly becoming reality. The question is no longer “if” these technologies will see the light of day, but “when.”
