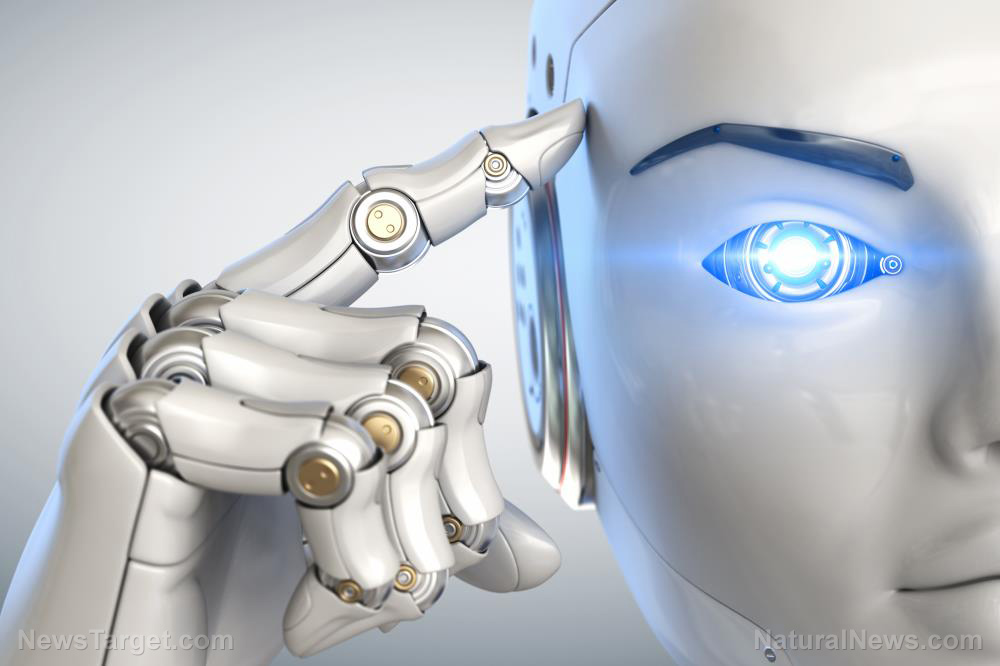

The U.S. military has been working on highly controversial technology for years. Whether we're talking about robots that feed on dead bodies or invasive spying, there is no shortage of reasons to be wary of the military-industrial complex. Now, the U.S. Army is funding research to advance AI technology, creating "deep neural networks" capable of lifelong learning. This new AI won't "forget" what it has learned in the past -- and can acquire new "skills" as it's deployed across the battlefield.

While this kind of new technology is promoted as being beneficial to the military, the reality is that we are getting closer to fully automated war. In a bleak and distant future lie autonomous war machines capable of fighting entire wars unmanned. What could go wrong?

Making AI even smarter

North Carolina State University researchers teamed up with the U.S. Army to create a framework for "deep neural networks" that will enhance artificial intelligence capabilities. The team reports that learning new tasks with their framework, Learn to Grow, can also make AI better at tasks it learned previously -- a process called "backward transfer."

Currently, AI is plagued by what's known as "catastrophic forgetting," wherein the technology "forgets" how to perform previous tasks after learning something new. Obviously, this poses a significant problem on the battlefield.
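To make "catastrophic forgetting" concrete, here is a minimal sketch in Python. It is a toy illustration, not the researchers' code: the one-weight model, the two made-up tasks (`task_a`, `task_b`) and the helper names `train` and `mse` are all assumptions chosen for brevity. The model learns task A, then learns task B with the same weight, and its error on task A jumps.

```python
def train(w, data, lr=0.1, epochs=50):
    """Plain gradient descent on squared error for the one-weight model y = w * x."""
    for _ in range(epochs):
        for x, y in data:
            grad = 2 * (w * x - y) * x  # derivative of (w*x - y)^2 with respect to w
            w -= lr * grad
    return w


def mse(w, data):
    """Mean squared error of the model y = w * x on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


# Two hypothetical tasks that need conflicting weights.
inputs = (-1.0, -0.5, 0.5, 1.0)
task_a = [(x, 2.0 * x) for x in inputs]   # task A: y = 2x
task_b = [(x, -3.0 * x) for x in inputs]  # task B: y = -3x

w = train(0.0, task_a)
error_a_before = mse(w, task_a)  # near zero: task A has been learned

w = train(w, task_b)             # keep training the same weight on task B
error_a_after = mse(w, task_a)   # large: task A has been overwritten
error_b_after = mse(w, task_b)   # near zero: task B has been learned

print(error_a_before, error_a_after, error_b_after)
```

Because both tasks share a single weight, learning task B has to overwrite what was learned for task A. Real networks have millions of weights, but the same tug-of-war is what continual learning systems try to avoid.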

Dr. Mary Anne Fields, program manager for Intelligent Systems at Army Research Office, an element of U.S. Army Combat Capabilities Development Command's Army Research Lab, commented, "The Army needs to be prepared to fight anywhere in the world so its intelligent systems also need to be prepared."

"We expect the Army's intelligent systems to continually acquire new skills as they conduct missions on battlefields around the world without forgetting skills that have already been trained. For instance, while conducting an urban operation, a wheeled robot may learn new navigation parameters for dense urban cities, but it still needs to operate efficiently in a previously encountered environment like a forest," she added.

The Learn to Grow project is a continual learning system designed to resolve the problem of catastrophic forgetting -- and according to the team's report, it outperforms previous continual learning efforts.

Smart or scary?

As a press release published by ScienceDaily explains:

To understand the Learn to Grow framework, think of deep neural networks as a pipe filled with multiple layers. Raw data goes into the top of the pipe, and task outputs come out the bottom. Every "layer" in the pipe is a computation that manipulates the data in order to help the network accomplish its task, such as identifying objects in a digital image. There are multiple ways of arranging the layers in the pipe, which correspond to different "architectures" of the network.

When deep neural networks learn new tasks, Learn to Grow conducts "explicit neural architecture optimization via search" -- allowing the framework to piece together the most logical series of "layers" needed to complete the new task. According to the team, this process is what helps prevent catastrophic forgetting.
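The idea of searching over arrangements of layers can be sketched in a few lines of Python. This is a loose toy under stated assumptions, not the Learn to Grow implementation: the "layers" are simple functions, the candidate new layers in `new_layers` are hypothetical and assumed to be already trained, and the search simply tries every combination of reusing a frozen old layer or swapping in a new one.

```python
from itertools import product

# Frozen layers already learned on an earlier task (hypothetical): y = 2x + 1
old_layers = [lambda x: 2.0 * x, lambda x: x + 1.0]

# Hypothetical candidate layers for the new task, one per position in the pipe.
new_layers = [lambda x: 5.0 * x, lambda x: x - 3.0]


def run(layers, x):
    """Push a value through the pipe, one layer after another."""
    for layer in layers:
        x = layer(x)
    return x


def task_error(layers, data):
    """Mean squared error of a pipe of layers on a dataset."""
    return sum((run(layers, x) - y) ** 2 for x, y in data) / len(data)


def search(old_layers, new_layers, data):
    """Try every reuse/new combination; prefer low error, then fewer new layers."""
    best = None
    for choices in product(("reuse", "new"), repeat=len(old_layers)):
        layers = [old if choice == "reuse" else new
                  for choice, old, new in zip(choices, old_layers, new_layers)]
        key = (task_error(layers, data), choices.count("new"))
        if best is None or key < best[0]:
            best = (key, choices, layers)
    return best[1], best[2]


# New task (hypothetical): y = 2x - 3
task_b = [(x, 2.0 * x - 3.0) for x in (-1.0, 0.0, 1.0, 2.0)]

choices_b, layers_b = search(old_layers, new_layers, task_b)
print(choices_b)
```

In this toy, the search keeps the first old layer and adds only one new layer for the new task. The old layers are never modified, so the pipe built for the earlier task still gives the same answers as before, which is the intuition behind avoiding catastrophic forgetting.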

"This Army investment extends the current state of the art machine learning techniques that will guide our Army Research Laboratory researchers as they develop robotic applications, such as intelligent maneuver and learning to recognize novel objects. This research brings AI a step closer to providing our warfighters with effective unmanned systems that can be deployed in the field," Dr. Fields explained further.

While proponents of this new AI tech are quick to sing its praises, there are very real concerns about how this technology could go awry. The U.S. military has come under fire repeatedly for engaging in shady activities: testing chemical sprays on unsuspecting civilians, weaponizing social media against political adversaries, and Big Brother-style spying, just to name a few. The military has even tried to build robots that eat dead humans for power.

Advances in AI learning can very easily be misused if the wrong people are in charge.

